AI Avatars as Assistive Technology for Neurodivergent Individuals in Asymmetrical Intelligent Interviewing Systems

- Dec 7, 2025


Updated: Dec 8, 2025

When I re-entered the traditional job market after almost a decade away, I was shocked to find a completely new ecosystem of algorithms that rank, parse, and filter applicants. This disturbing trend bled into my application today to a PhD program at Carnegie Mellon University, which required a video essay with only three attempts, each new take deleting the previous one.

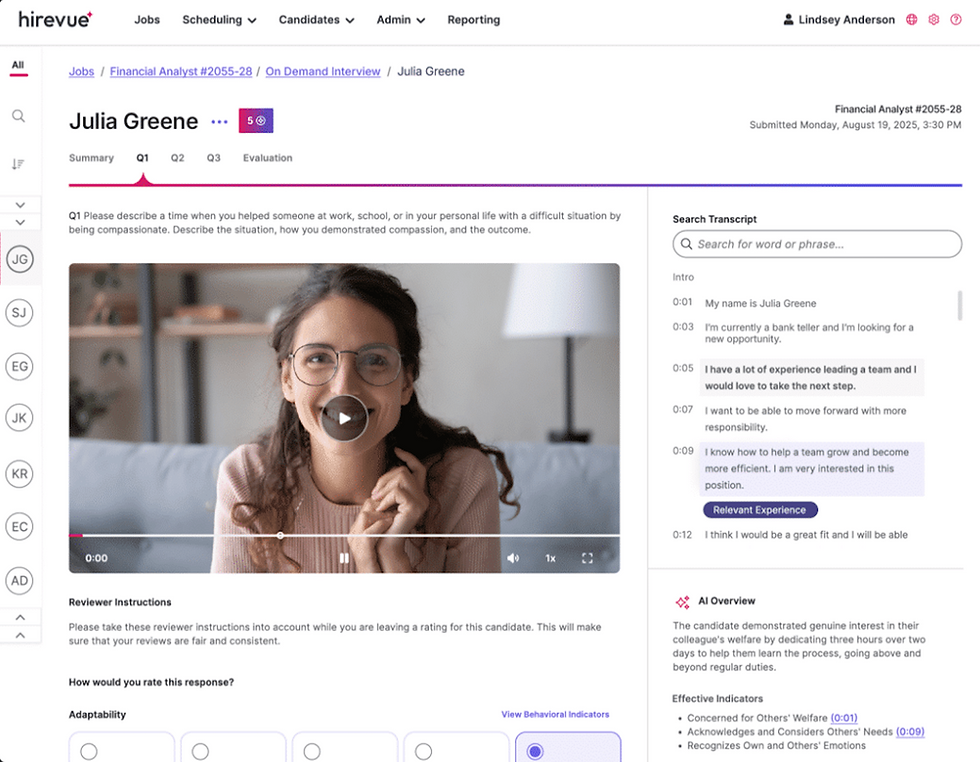

This practice is part of a newer phenomenon that has arrived with the exponential rise of artificial intelligence for workflow optimization: "intelligent" or "one-way" interviewing. Typically in this model, a third party mediates the interviewing process between a hiring company and a prospective employee. The applicant is given a prompt, sometimes on the spot, and is instructed to record a response for submission. That recording is then reviewed by the hiring company and evaluated either by a human against target parameters or by an AI system. The latter is the more unsettling part of this process. Let me take this step by step.

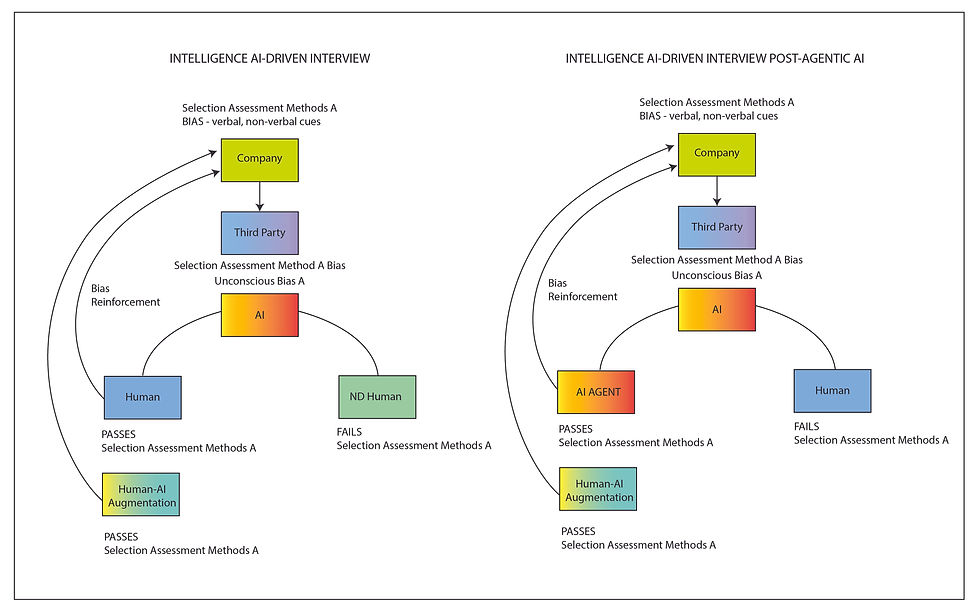

First, I created a diagram, shown above, to communicate the shift in power structure from a traditional conversational interview to an AI-driven intelligent interview.

In a traditional interview, the conversation between two people can gradually shift the unconscious biases of each party. Multiple interviewers at a single company also increase the diversity of views and biases. In the AI-driven interview process, by contrast, the interaction is one-way: an AI evaluates the applicant's submitted recording and provides an assessment. This AI runs on an opaque, proprietary algorithm that most likely uses deep learning to recognize verbal and non-verbal patterns. Dena F. Mujtaba and Nihar R. Mahapatra at the University of Michigan published an excellent paper addressing the fairness conflicts within these systems. As Figure 1 illustrates, the greatest harm of this embedded bias comes from its scale. The structure undermines equity on two levels: it removes the conversation, where bias could otherwise be reversed as it emerges, and it replaces that conversation with an unregulated system that amplifies opaque biases on a vast scale.

This doesn't even address the asymmetry of data collection and surveillance between parties with a large power differential. Requiring applicants to consent to the appropriation of their behavior, likeness, and other aspects of their video is an infringement of basic human rights. It creates massive potential for abuse and systemic damage, as Cathy O'Neil details in her book Weapons of Math Destruction (2016).

Furthermore, interviewing processes carry an ableist bias against neurodivergent (ND) individuals specifically. Assessment metrics rely on these verbal and non-verbal patterns, which are reinforced by the neurotypical behavior of most applicants. For instance, it is common for a person with Autism Spectrum Disorder (ASD) to have difficulty maintaining eye contact, giving concise answers to unanticipated questions under time pressure, or staying clear when anxious, and to exhibit stimming behaviors. The Society for Human Resource Management (SHRM) Selection Assessment Methods (Fig. 3) show a clear systemic bias toward neurotypical behavior, such as eye contact, voice inflection, professional demeanor, and appearance. An ND person has more advantages in a one-on-one human interview than in a one-way recording, because a live setting offers room to stim, makes lags in response time less noticeable, and allows mirroring of the interviewer.

Without access to these lifelines, an ND interviewee is at an even greater disadvantage. Even if safeguards and evaluations are applied to third-party private companies such as HireVue, deep learning-driven interview assessments will always put marginalized communities in conflict with the majority, because by nature the minority is an outlier within a majority-weighted pattern. Given this disadvantage, how does an ND person compete in a difficult job market when they repeatedly fail assessment filters because their disability prevents them from performing the expected verbal and non-verbal cues?

I propose coupling an ND person with an AI avatar that augments their real-time digital performance to output the desired attributes. Given this ambitious proposition, I want to address the ethical question: is an augmented representation dishonest? Before answering, I want to highlight a few additional points about AI-driven interview processes.

First, this system is economically driven and does not exist in a vacuum, so it is worth examining the motivation behind this technological direction. In our post-Fordist labor market, automation caused a net loss of American jobs (MIT News, 2004). 'White-collar' work, mostly augmented by automation, grew as physical labor opportunities declined. With the rise of AI agents, this work will now be displaced by artificial intelligence. Beam, a company that supplies agentic AI for automated tasks, defines labor arbitrage as:

"In simple words, labor arbitrage can be understood as strategically sourcing talent from regions where equivalent skills are available at reduced costs, generating significant savings opportunities without compromising deliverable quality.

Synonyms for “labor arbitrage” include offshoring, outsourcing, foreign outsourcing, international outsourcing, and exploiting lower labor costs.

The definition has undergone remarkable evolution over recent decades, catalyzed by globalization, technological breakthroughs, and transformative economic policies that have redefined workforce management paradigms."

Building on this economic model, agentic AI will be in direct competition with humans in the job market, and it's not looking good for humans. In a global system that prioritizes labor arbitrage, the current problematic hiring structure is going to evolve (Fig. 4).

As agentic AI continues to grow exponentially, the range of work it can replace will expand. No guardrails currently exist to discourage the mass displacement of human workers by more cost-effective AI agents. Following this theoretical labor shift, I posit that humans will no longer be able to compete without AI augmentation. I am currently working to allocate resources toward further research on how a symbiotic human-AI system could be designed, theoretically and practically, for privacy, security, and equity.

Recentering AI control on the individual will use augmentation to increase autonomy and opportunity in a post-AI landscape. As agentic AI replaces workers, offices will empty, physical workspaces will change, and company culture will undergo a massive paradigm shift. If an agentic AI can dynamically adapt itself to be hired through AI-driven interviewing processes, why can't a human? To maximize profit, AI agents will most certainly take on virtual human or humanoid robotic forms to perform customer-facing work. These artificial representations will still need to compete and meet required metrics, so human-AI augmented entities can still compete against agentic AI in virtual spaces.

This brings me to my final judgment: ND people can fairly use avatar augmentation in digitally dominant spaces that require verbal and non-verbal performance. Such augmentation would not only serve as assistive technology for ND people; it might also be required of any human competing against an AI agent in the future. Figure 5 visualizes this shift in performance and competition.

Both systems in Figure 5 use intelligent interviewing processes, which I project will only reinforce systemic ableism and unconscious bias. Note that in both, inference depends on centralized assessment metrics applied through the third-party AI.

Overall, the process of 'intelligent interviewing' is, by default, problematic and should be regulated not only for current inclusion of marginalized populations but also for all humans in the not-so-distant future.

Looks like I need to get started training my own avatar... LFG
